Artificial intelligence (AI) refers to the ability of computer systems to perform tasks associated with human cognition, such as learning, reasoning, and problem-solving. AI systems perceive their environment and use what they have learned to take actions that increase the likelihood of achieving specific goals. It is a multidisciplinary field that draws on computer science, mathematics, and engineering, and it focuses on developing software and methods that enable machines to behave intelligently.

Mentioned in this timeline

PlayStation is a video game brand owned by Sony Interactive...

Fox News Channel (FNC) is an American multinational conservative news...

Facebook is a social media and networking service created in...

Nvidia Corporation, based in Santa Clara, California, is a prominent...

The United States of America is a federal republic primarily...

Google LLC is a multinational technology corporation specializing in a...

Trending

David John Matthews is a prominent American musician best known as the lead vocalist songwriter and guitarist for the highly...

2 months ago Patton Oswalt narrates Stephen King & Peter Straub's 'Other Worlds Than These' audiobook.

2 months ago Sarah Pidgeon's 2026 style: CBK fashion, minimalist looks, and beauty recreations.

1 year ago Pope Leo XIV, White Sox Superfan, Gains Popularity After World Series Appearance

1 year ago Natasha Cloud Joins Liberty, Aims to Elevate WNBA and Championship Hopes.

1 year ago Darren Criss and Jinkx Monsoon Star in 'Hamilton' Ham4Ham Return Performance

Popular

Michael Joseph Jackson the King of Pop was a highly...

Graham Cunningham Platner is an American oyster farmer and Marine...

Ken Paxton is an American politician and lawyer serving as...

Steve Hilton is a British-American conservative political commentator and former...

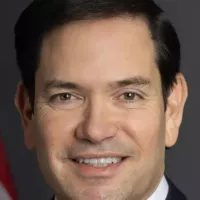

Marco Rubio is an American politician and diplomat currently serving...

Ron Harper is a retired American professional basketball player who...