Deep learning, a subfield of machine learning, uses neural networks with multiple layers to perform tasks like classification, regression, and representation learning. Inspired by the biological brain, it involves training artificial neurons arranged in layers to process data. The term "deep" refers to the multiple layers in the network. Deep learning methods can be supervised, semi-supervised, or unsupervised.

1948: Turing's work on Intelligent Machinery

In 1948, Alan Turing produced work on "Intelligent Machinery" containing ideas related to artificial evolution and learning RNNs, but it was not published in his lifetime.

1958: Rosenblatt proposed the perceptron

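In 1958, Frank Rosenblatt proposed the perceptron, an MLP with three layers: an input layer, a hidden layer with randomized weights that did not learn, and an output layer.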
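
A minimal Python sketch of the perceptron learning rule follows. It assumes a simplified case where the trainable output layer reads the inputs directly, so Rosenblatt's fixed, randomly weighted hidden layer is left out. The function names are hypothetical. Whenever a sample is misclassified, the rule moves the weights by the error times the input.

```python
from typing import List, Sequence, Tuple


def step(z: float) -> int:
    """Threshold activation: fire (1) if the weighted sum is positive, else 0."""
    return 1 if z > 0 else 0


def train_perceptron(
    samples: Sequence[Tuple[Sequence[float], int]],
    lr: float = 1.0,
    max_epochs: int = 100,
) -> Tuple[List[float], float]:
    """Perceptron learning rule: w <- w + lr * (target - prediction) * x."""
    n = len(samples[0][0])
    w = [0.0] * n
    b = 0.0
    for _ in range(max_epochs):
        errors = 0
        for x, target in samples:
            pred = step(sum(wi * xi for wi, xi in zip(w, x)) + b)
            delta = target - pred  # 0 when correct, +1 or -1 when wrong
            if delta != 0:
                errors += 1
                w = [wi + lr * delta * xi for wi, xi in zip(w, x)]
                b += lr * delta
        if errors == 0:  # converged: every sample is classified correctly
            break
    return w, b


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    return step(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Usage: logical AND is linearly separable, so the rule converges.
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print([predict(w, b, x) for x, _ in AND])  # [0, 0, 0, 1]
```

Because AND is linearly separable, the perceptron convergence theorem guarantees that this loop stops with zero errors. On a non-separable task such as XOR, a single trainable layer on raw inputs cannot succeed.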

1960: Kelley developed a continuous precursor of backpropagation

In 1960, Henry J. Kelley described a continuous precursor of backpropagation in the context of control theory.

1960: R. D. Joseph's network

In 1960, R. D. Joseph created a network that Rosenblatt's 1962 book later described as "functionally equivalent to a variation of" its four-layer system.

1962: Rosenblatt published a book introducing variants and computer experiments

In 1962, Frank Rosenblatt published a book that introduced variants and computer experiments, including a version with four-layer perceptrons "with adaptive preterminal networks" in which the last two layers have learned weights.

1962: Rosenblatt introduced the terminology 'back-propagating errors'

In 1962, Rosenblatt introduced the terminology "back-propagating errors", but he did not know how to implement this.

1965: First working deep learning algorithm published

In 1965, Alexey Ivakhnenko and Lapa published the Group method of data handling, the first working deep learning algorithm to train arbitrarily deep neural networks.

1967: First deep learning MLP trained by stochastic gradient descent

In 1967, Shun'ichi Amari published the first deep learning multilayer perceptron (MLP) trained by stochastic gradient descent.

1969: Introduction of the ReLU activation function

In 1969, Kunihiko Fukushima introduced the ReLU (rectified linear unit) activation function.
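
The ReLU is conventionally defined as f(x) = max(0, x). A minimal Python sketch follows. The function names are hypothetical. Setting the derivative to 0 at x = 0 is a common convention and not part of the definition, because the function has no derivative at that point.

```python
def relu(x: float) -> float:
    """Rectified linear unit: passes positive inputs through, zeroes out the rest."""
    return max(0.0, x)


def relu_grad(x: float) -> float:
    """Derivative of ReLU; taken as 0 at x == 0 by a common convention."""
    return 1.0 if x > 0 else 0.0


print([relu(v) for v in (-2.0, 0.0, 3.5)])  # [0.0, 0.0, 3.5]
```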

1970: First publication of modern backpropagation

In 1970, Seppo Linnainmaa first published the modern form of backpropagation in his master's thesis.
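
Modern backpropagation is usually identified with the reverse mode of automatic differentiation. The minimal Python sketch below shows that idea on scalars. The class `Var` and its methods are hypothetical names and do not come from any library. Each node records its parents together with the local partial derivatives. `backward()` then walks the graph in reverse topological order and accumulates gradients with the chain rule.

```python
import math
from typing import List, Tuple


class Var:
    """A scalar node in a computation graph that records how to propagate gradients."""

    def __init__(self, value: float, parents: Tuple[Tuple["Var", float], ...] = ()):
        self.value = value
        self.parents = parents  # (parent node, local partial d(self)/d(parent))
        self.grad = 0.0

    def __add__(self, other: "Var") -> "Var":
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other: "Var") -> "Var":
        return Var(self.value * other.value, ((self, other.value), (other, self.value)))

    def tanh(self) -> "Var":
        t = math.tanh(self.value)
        return Var(t, ((self, 1.0 - t * t),))

    def backward(self) -> None:
        """Reverse sweep: visit nodes in reverse topological order, applying the chain rule."""
        order: List["Var"] = []
        seen = set()

        def visit(node: "Var") -> None:
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0  # d(output)/d(output)
        for node in reversed(order):
            for parent, local in node.parents:
                parent.grad += local * node.grad


# Usage: y = tanh(w * x + b) for a single neuron.
x, w, b = Var(2.0), Var(0.5), Var(-1.0)
y = (w * x + b).tanh()
y.backward()
print(y.value, w.grad, x.grad, b.grad)  # 0.0 2.0 0.5 1.0
```

Reverse topological order ensures that a node's gradient is complete before the node passes it on to its parents. As a result, values used more than once in the graph are handled correctly.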

1971: Republishing of backpropagation

In 1971, G.M. Ostrovski et al. republished backpropagation.

1971: Early recurrent neural networks published

In 1971, Kaoru Nakano published early recurrent neural networks.

1971: Deep network with eight layers described

In 1971, a paper described a deep network with eight layers trained by the Group method of data handling.

1972: Adaptive RNN architecture

In 1972, Shun'ichi Amari created an adaptive RNN architecture.

1974: Werbos thesis

In 1974, Paul Werbos's PhD thesis did not yet describe the backpropagation algorithm.

1979: Introduction of the Neocognitron

In 1979, Kunihiko Fukushima introduced the Neocognitron, a deep learning architecture for convolutional neural networks (CNNs) with convolutional and downsampling layers, though it was not trained by backpropagation.

1982: Hopfield republishes Amari's RNN

In 1982, John Hopfield republished Shun'ichi Amari's learning RNN.

1982: Werbos applied backpropagation to neural networks

In 1982, Paul Werbos applied backpropagation to neural networks.

1985: Boltzmann machine learning algorithm

In 1985, the Boltzmann machine learning algorithm was introduced. It was briefly popular before backpropagation eclipsed it in 1986.

1986: Popularization of backpropagation

In 1986, David E. Rumelhart et al. popularised backpropagation.

1986: Introduction of the term deep learning

In 1986, Rina Dechter introduced the term "deep learning" to the machine learning community.

1986: Jordan network

In 1986, the Jordan network, an early influential work, applied RNNs to study problems in cognitive psychology.

1986: Backpropagation Eclipses Boltzmann Machine Learning Algorithm

In 1986, the backpropagation algorithm eclipsed the Boltzmann machine learning algorithm.

1988: CNN applied to alphabet recognition

In 1988, Wei Zhang applied a backpropagation-trained CNN to alphabet recognition.

1988: Protein structure prediction

In 1988, a neural network became state of the art in protein structure prediction, an early application of deep learning to bioinformatics.

1989: First proof of the universal approximation theorem

In 1989, George Cybenko published the first proof of the universal approximation theorem for sigmoid activation functions in feedforward neural networks.
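
Cybenko's result can be stated compactly. Assume σ is a continuous sigmoidal function, meaning σ(t) → 1 as t → +∞ and σ(t) → 0 as t → −∞. Then for every continuous f on the unit cube [0,1]^n and every ε > 0, there exist N, weights w_j, biases θ_j, and output coefficients α_j such that a single-hidden-layer network G satisfies the following. The symbol names are chosen here for illustration.

```latex
G(x) = \sum_{j=1}^{N} \alpha_j \, \sigma\!\left(w_j^{\top} x + \theta_j\right),
\qquad
\sup_{x \in [0,1]^n} \lvert G(x) - f(x) \rvert < \varepsilon
```

The theorem guarantees that such a network exists. It does not say how many hidden units are needed or how to find the weights.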

1989: Creation of LeNet for ZIP code recognition

In 1989, Yann LeCun et al. created a CNN called LeNet for recognizing handwritten ZIP codes on mail.

1990: CNN implemented on optical computing hardware

In 1990, Wei Zhang implemented a CNN on optical computing hardware.

1990: Elman network

In 1990, the Elman network, an early influential work, applied RNNs to study problems in cognitive psychology.

1991: Publication of adversarial neural networks

In 1991, Jürgen Schmidhuber published adversarial neural networks that contest with each other in the form of a zero-sum game, called "artificial curiosity".

1991: Proposal of a hierarchy of RNNs

In 1991, Jürgen Schmidhuber proposed a hierarchy of RNNs pre-trained one level at a time by self-supervised learning, called a "neural history compressor".

1991: Generalization of the universal approximation theorem

In 1991, Kurt Hornik generalized the universal approximation theorem to feed-forward multi-layer architectures.

1991: Implementation of the neural history compressor

In 1991, Sepp Hochreiter implemented the neural history compressor in his diploma thesis and identified and analyzed the vanishing gradient problem.
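
The following is a simplified illustration of the problem and not Hochreiter's full analysis. It assumes a toy setting: a scalar chain of logistic sigmoid units h_k = σ(w_k h_{k-1}). The chain rule multiplies one factor per layer. Because σ'(z) = σ(z)(1 − σ(z)) never exceeds 1/4, the gradient shrinks geometrically whenever |w_k| ≤ 1:

```latex
\frac{\partial h_n}{\partial h_0} = \prod_{k=1}^{n} \sigma'(w_k h_{k-1})\, w_k,
\qquad
\left\lvert \frac{\partial h_n}{\partial h_0} \right\rvert \le \left(\tfrac{1}{4}\right)^{n}
\quad \text{if } \lvert w_k \rvert \le 1 .
```

With n = 10, the bound is already 4^{-10} ≈ 9.5 × 10^{-7}. An RNN unfolded over many time steps behaves like such a deep chain.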

1991: CNN applied to medical image segmentation

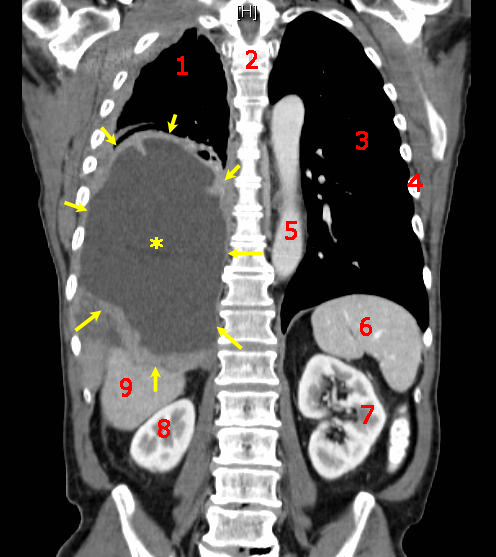

In 1991, a CNN was applied to medical image object segmentation and breast cancer detection in mammograms.

1991: TIMIT Dataset error rates

Error rates on the TIMIT dataset, measured as percent phone error rate (PER), have been summarized for results reported since 1991.

1993: Neural history compressor solves a "Very Deep Learning" task

In 1993, a neural history compressor solved a "Very Deep Learning" task that required more than 1000 subsequent layers in an RNN unfolded in time.

1994: Werbos thesis reprinted

In 1994, Paul Werbos' 1974 PhD thesis was reprinted.

1995: Description of brain organization

A 1995 description stated, "...the infant's brain seems to organize itself under the influence of waves of so-called trophic-factors ... different regions of the brain become connected sequentially, with one layer of tissue maturing before another and so on until the whole brain is mature".

1995: Development of Architectures and Methods Inspired by Statistical Mechanics

Between 1985 and 1995, Terry Sejnowski, Peter Dayan, Geoffrey Hinton, and others developed architectures and methods, including the Boltzmann machine, restricted Boltzmann machine, Helmholtz machine, and the wake-sleep algorithm, inspired by statistical mechanics for unsupervised learning of deep generative models.

1995: Publication of LSTM

In 1995, Long Short-Term Memory (LSTM) was published. It can learn "very deep learning" tasks with long credit assignment paths.

1998: SRI International's Success with DNNs in Speech Processing

In 1998, SRI International, funded by the US government's NSA and DARPA, reported significant success with deep neural networks for speech processing in the NIST Speaker Recognition benchmark. The system was deployed in the Nuance Verifier, the first major industrial application of deep learning.

1998: Application of LeNet-5 by banks

In 1998, Yann LeCun et al.'s LeNet-5, a 7-level CNN that classifies digits, was applied by several banks to recognize handwritten numbers on checks digitized as 32×32-pixel images.

1999: Introduction of the forget gate in LSTM

In 1999, the "forget gate" was added to LSTM, and this version became the standard LSTM architecture.
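
For reference, a commonly used form of the LSTM cell with a forget gate appears below. It assumes modern notation without peephole connections, which differs from the notation of the 1999 paper. The forget gate f_t scales the previous cell state c_{t-1}. In the original LSTM, the cell state was carried over unchanged, which corresponds to fixing f_t = 1.

```latex
\begin{aligned}
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) \\
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) \\
g_t &= \tanh(W_g x_t + U_g h_{t-1} + b_g) \\
c_t &= f_t \odot c_{t-1} + i_t \odot g_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```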

2000: Deep learning in artificial neural networks

In 2000, Igor Aizenberg and colleagues introduced the term "deep learning" to artificial neural networks in the context of Boolean threshold neurons.

2003: LSTM becomes competitive

In 2003, LSTM became competitive with traditional speech recognizers on certain tasks.

2003: LSTM accelerates progress in speech recognition

LSTM, introduced to speech recognition around 2003–2007, accelerated progress in eight major areas of the field.

2004: Early work on hardware advances

In 2004, some early work related to hardware advances in deep learning was done.

2006: Combination of LSTM with CTC

In 2006, Alex Graves, Santiago Fernández, Faustino Gomez, and Schmidhuber combined LSTM with connectionist temporal classification (CTC) in stacks of LSTMs.

2006: Development of Deep Belief Networks

In 2006, Geoffrey Hinton, Ruslan Salakhutdinov, Simon Osindero, and Yee-Whye Teh developed deep belief networks (DBNs) for generative modeling.

2008: TAMER framework developed

In 2008, researchers at The University of Texas at Austin (UT) developed a machine learning framework called Training an Agent Manually via Evaluative Reinforcement, or TAMER.

2009: First RNN to win a pattern recognition contest

In 2009, LSTM became the first RNN to win a pattern recognition contest, in connected handwriting recognition.

2009: GPU-based deep learning demonstration

In 2009, Raina, Madhavan, and Andrew Ng reported a 100-million-parameter deep belief network trained on 30 Nvidia GeForce GTX 280 GPUs, an early demonstration of GPU-based deep learning.

2009: NIPS Workshop on Deep Learning for Speech Recognition

The 2009 NIPS Workshop on Deep Learning for Speech Recognition was motivated by the limitations of deep generative models of speech. It was subsequently found that using large amounts of training data for straightforward backpropagation with DNNs produced lower error rates than then state-of-the-art Gaussian mixture model (GMM)/hidden Markov model (HMM) systems.

2009: DNNs for speaker recognition

DNNs made their debut in speaker recognition in 2009.

2010: Start of industrial applications of deep learning to large-scale speech recognition

Around 2010, industrial applications of deep learning to large-scale speech recognition started.

2010: Extension of Deep Learning to Large Vocabulary Speech Recognition

In 2010, researchers extended deep learning from TIMIT to large vocabulary speech recognition, by adopting large output layers of the DNN based on context-dependent HMM states constructed by decision trees.

2011: DanNet achieves superhuman performance

In 2011, DanNet, a CNN, achieved superhuman performance in a visual pattern recognition contest, outperforming traditional methods. Max-pooling CNNs on GPU significantly improved performance.

2011: Superhuman performance in traffic sign recognition

In 2011, deep learning-based image recognition first became "superhuman" in recognition of traffic signs.

2011: DNNs for speech recognition

The debut of DNNs for speech recognition around 2011 accelerated progress in eight major areas of speech recognition.

October 2012: AlexNet wins ImageNet competition

In October 2012, AlexNet won the ImageNet competition by a significant margin over shallow machine learning methods.

2012: AlexNet Computation

AlexNet, from 2012, is the starting point of OpenAI's later estimate of the hardware computation used in the largest deep learning projects.

2012: FNN learns to recognize high-level concepts

In 2012, a feedforward neural network (FNN) created by Andrew Ng and Jeff Dean learned to recognize higher-level concepts, such as cats, from unlabeled images taken from YouTube videos.

2013: ANNs misclassify minuscule perturbations of correctly classified images

In 2013, researchers found that some deep learning architectures misclassified images that differed only by minuscule perturbations from correctly classified ones.

2014: Generative adversarial network (GAN) becomes state of the art

In 2014, generative adversarial networks (GANs) became state of the art in generative modeling.

2014: Superhuman performance in human face recognition

In 2014, deep learning-based image recognition became "superhuman" in the recognition of human faces.

2014: ANNs classify unrecognizable images confidently

In 2014, researchers found that some deep learning architectures confidently classified unrecognizable images as belonging to a familiar category of ordinary images.

2014: Use of adversarial neural networks in GANs

In 2014, the principle of adversarial neural networks was used in generative adversarial networks (GANs).
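
The adversarial principle in GANs is usually written as a two-player minimax game between a generator G and a discriminator D. This is the formulation from the original 2014 GAN paper. Here p_data denotes the data distribution and p_z denotes the generator's noise prior.

```latex
\min_{G} \max_{D} \; V(D, G)
= \mathbb{E}_{x \sim p_{\text{data}}}\!\left[\log D(x)\right]
+ \mathbb{E}_{z \sim p_{z}}\!\left[\log\bigl(1 - D(G(z))\bigr)\right]
```

D is trained to distinguish real samples from generated ones, and G is trained to make D fail. Each network's gain is therefore the other's loss.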

2014: Training "very deep neural network"

In 2014, the state of the art was training "very deep neural networks" with 20 to 30 layers.

May 2015: Highway network is published

In May 2015, the highway network was published as a new technique to train very deep networks.

Dec 2015: Residual neural network (ResNet) is published

In December 2015, the residual neural network (ResNet) was published as a new technique to train very deep networks. ResNet behaves like an open-gated Highway Net.
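
Here is one way to read "open-gated". Assume the general highway formulation, which has a transform gate T and a carry gate C. A highway layer computes the first expression below, often with the coupling C = 1 − T. Fixing both gates open at 1 yields the residual form used in ResNet, where F plays the role of H.

```latex
\begin{aligned}
\text{Highway:}\quad & y = H(x)\,T(x) + x\,C(x) \\
\text{ResNet } (T \equiv C \equiv 1):\quad & y = F(x) + x
\end{aligned}
```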

2015: Deep learning impacts the field of art

Around 2015, deep learning started impacting the field of art. Early examples included Google DeepDream and neural style transfer, which were based on pretrained image classification neural networks, such as VGG-19.

2015: AlphaGo beats a professional Go player

In 2015, DeepMind demonstrated its AlphaGo system, which learned the game of Go well enough to beat a professional Go player. Google Translate also used a neural network to translate between more than 100 languages.

2015: Diffusion models developed

In 2015, diffusion models were developed.

2015: Google's speech recognition improved by 49%

In 2015, Google's speech recognition improved by 49% with an LSTM-based model, which Google made available through Google Voice Search on smartphones.

2016: ANNs used to doctor images

In 2016, researchers used one ANN to doctor images in trial-and-error fashion, identify another ANN's focal points, and thereby generate images that deceived it.

2016: Sounds could make Google Now open web address

In 2016, researchers found that specific sounds could manipulate the Google Now voice command system to open a specific web address, posing a security risk by potentially directing users to malicious websites.

2017: Neural networks perform beyond humans

As of 2017, neural networks typically had a few thousand to a few million units and millions of connections, and could perform many tasks at a level beyond that of humans.

2017: Graph neural networks used to predict properties of molecules

In 2017 graph neural networks were used for the first time to predict various properties of molecules in a large toxicology data set.

2017: Stickers cause ANN to misclassify stop signs

In 2017 researchers added stickers to stop signs and caused an ANN to misclassify them.

2017: Covariant.ai was launched

In 2017, Covariant.ai, a company focused on integrating deep learning into factories, was launched.

2017: AlphaZero Computation

OpenAI estimated the hardware computation used in the largest deep learning projects from AlexNet (2012) up to AlphaZero (2017). It found a 300,000-fold increase in the amount of computation required, with a doubling-time trendline of 3.4 months.
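
As a consistency check on these figures, a 300,000-fold increase corresponds to about 18 doublings. At 3.4 months per doubling, that spans roughly five years, in line with the gap between AlexNet and AlphaZero. This arithmetic is not part of OpenAI's analysis.

```latex
\log_2(300\,000) \approx 18.2,
\qquad
18.2 \times 3.4\ \text{months} \approx 62\ \text{months} \approx 5.2\ \text{years}
```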

2018: Deep TAMER introduced

In 2018, a new algorithm called Deep TAMER was introduced in a collaboration between the U.S. Army Research Laboratory (ARL) and UT researchers.

2018: Nvidia's StyleGAN achieves excellent image quality

In 2018, Nvidia's StyleGAN, based on the Progressive GAN, achieved excellent image quality, and image generation by GANs reached popular success.

2018: Yoshua Bengio, Geoffrey Hinton and Yann LeCun awarded the Turing Award

Yoshua Bengio, Geoffrey Hinton and Yann LeCun were awarded the 2018 Turing Award for breakthroughs that have made deep neural networks a critical component of computing.

2019: GPUs displace CPUs for training AI

By 2019, graphics processing units (GPUs), often with AI-specific enhancements, had displaced CPUs as the dominant method for training large-scale commercial cloud AI.

2019: Generative neural networks used to produce molecules

In 2019, generative neural networks were used to produce molecules that were validated experimentally all the way into mice.

2020: AlphaFold achieves high accuracy in protein structure prediction

In 2020, AlphaFold, a deep-learning based system, achieved a level of accuracy significantly higher than all previous computational methods in predicting protein structure.

2020: Experiments with large-area active channel material published

In 2020, Marega et al. published experiments with a large-area active channel material for developing logic-in-memory devices and circuits based on floating-gate field-effect transistors (FGFETs).

2021: Release of Epigenetic aging clock

In 2021, Deep Longevity planned to release an epigenetic aging clock of unprecedented accuracy for public use.

2021: Integrated photonic hardware accelerator proposed

In 2021, J. Feldmann et al. proposed an integrated photonic hardware accelerator for parallel convolutional processing, highlighting its advantages over electronic counterparts.

2022: DALL·E 2 and Stable Diffusion systems released

In 2022 systems such as DALL·E 2 and Stable Diffusion were released.

November 2023: GNoME discovers over 2 million new materials

In November 2023, Google DeepMind and Lawrence Berkeley National Laboratory announced that they had developed an AI system known as GNoME, which discovered over 2 million new materials. The system's predictions were validated through autonomous robotic experiments.

Mentioned in this timeline

Nvidia is a prominent American technology company specializing in the...

Google LLC is a multinational technology corporation specializing in a...

CNN Cable News Network is an American multinational news media...

OpenAI is an American AI research organization with both non-profit...

Alan Mathison Turing was a highly influential English mathematician computer...

Cancer is a collection of diseases characterized by the uncontrolled...

Trending

40 minutes ago Kathy Ireland Invests in Capacity, AI Support Automation Leader, in Strategic Move.

40 minutes ago Lori Loughlin makes rare red carpet appearance with daughters after seven years.

40 minutes ago Catherine Bell stuns at 57: 'JAG' reunion highlights ageless beauty.

41 minutes ago Whitlock criticizes Jordan's Oscar, Cruz questions Best Picture winners, inclusion criteria debated.

2 hours ago Stephen A. Smith Praises LeBron James' Leadership Amid Lakers' Success.

2 hours ago Kehlani Announces Self-Titled Fifth Album Set for April Release: Details Revealed

Popular

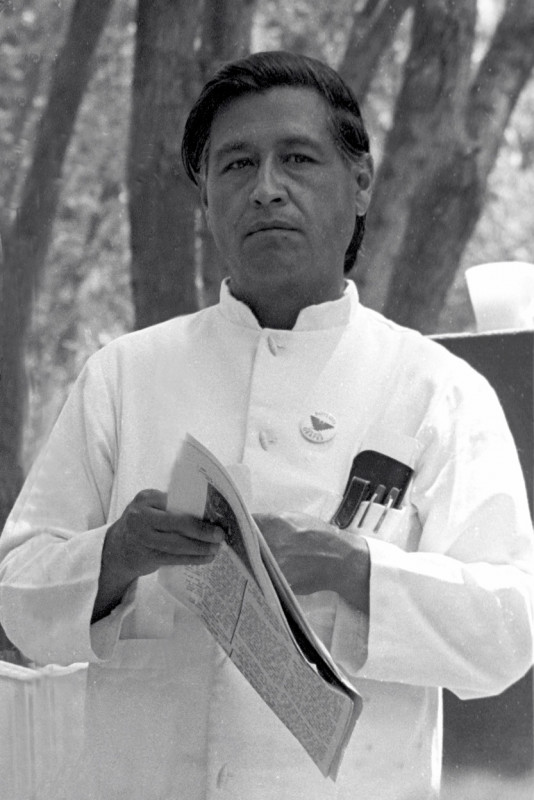

Cesar Chavez was a prominent American labor leader and civil...

Sean Penn is a highly acclaimed American actor and film...

Paula White-Cain is a prominent American televangelist and key figure...

Chaz Bono is an American writer musician and actor known...

Joseph Clay Kent is an American politician and former military...

Benjamin Bibi Netanyahu is an Israeli politician and diplomat currently...